Ever wondered what lurks at the bottom of your garden at night, or which furry friends are visiting the school playground once all the children have gone home?

Using a Raspberry Pi and camera, along with Google’s Vision API, is a cheap but effective way to capture some excellent close-ups of foxes, birds, mice, squirrels and badgers, and to tweet the results.

This article first appeared in The MagPi 71 and was written by Jody Carter.

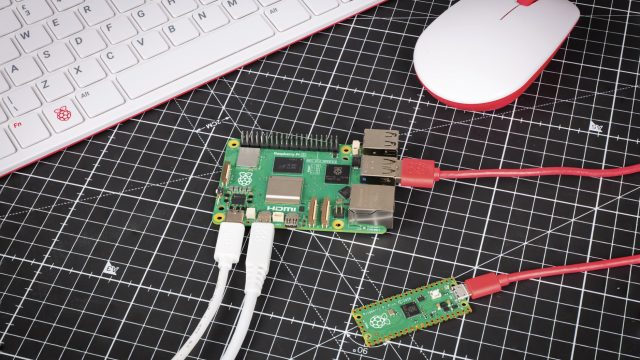

Using Google’s Vision API makes it really easy to get AI to classify our own images. We’ll install and set up some motion detection, link to our Vision API, and then tweet the picture if there’s a bird in it. It’s assumed you are using a new Raspbian installation on your Raspberry Pi and you have your Pi camera set up (whichever model you’re using). You will also need a Twitter account and a Google account to set up the APIs.

You'll need

- Pi Camera Module

- Pi NoIR Camera Module (optional)

- ZeroCam NightVision (optional)

- Waterproof container (like a jam jar)

- Blu Tack, Sugru, elastic bands, carabiners

- ZeroView (optional)

Motion detection with Pi-timolo

There are many different motion-detection libraries available, but Pi-timolo was chosen as it is easy to edit the Python source code. To install, open a Terminal window and enter:

    cd ~
    wget https://raw.github.com/pageauc/pi-timolo/master/source/pi-timolo-install.sh
    chmod +x pi-timolo-install.sh
    ./pi-timolo-install.sh

Once installed, test it by typing in cd ~/pi-timolo and then ./pi-timolo.py to run the Python script. At this point, you should be alerted to any errors, such as the camera not being installed correctly; otherwise, the script will run and you should see debug info in the Terminal window. Trigger a capture by waving your hand in front of the camera, then check the pictures by looking in Pi-timolo > Media Recent > Motion. You may need to change the image size and orientation of the camera; in the Terminal window, enter nano config.py and edit these variables: imageWidth, imageHeight, and imageRotation.

While we’re here, if you get a lot of false positives, try changing the motionTrackMinArea and motionTrackTrigLen variables: increasing their values reduces sensitivity, so experiment until the camera stops triggering on things you don’t care about. See the Pi-timolo GitHub repo for more details.
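As a rough illustration of the edits described above, the relevant part of config.py might end up looking something like this. The variable names are the ones mentioned in the article; every value is an illustrative assumption rather than a Pi-timolo default, so check the comments in your own config.py before copying anything.

```python
# Excerpt in the style of pi-timolo's config.py.
# All values below are illustrative assumptions, not the project's defaults.

imageWidth = 1280     # width of the saved picture, in pixels
imageHeight = 720     # height of the saved picture, in pixels
imageRotation = 180   # degrees; assumed useful if the camera is mounted upside down

# Raise these two to make motion detection less sensitive
# (fewer false positives), as suggested in the article.
motionTrackMinArea = 200
motionTrackTrigLen = 100
```

Change one value at a time and restart ./pi-timolo.py after each edit, so you can tell which setting made the difference.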

There’s also going to be some editing of the pi-timolo.py file, so don’t close the Terminal window. Code needs to be added to import some Python libraries (below), and also added to the function userMotionCodeHere() to check with the Vision API before tweeting. We can do this now in preparation for setting up our Google and Twitter APIs. You should still be in the Pi-timolo folder, so type nano pi-timolo.py and add the imports at the top of the file. Next, press CTRL+W to use the search option to find the userMotionCodeHere() function and where it’s called from. Add the new code into the function (line 240), before the return line. Also locate where the function is being called from (line 1798), and change the call so that it passes the image file name and path. Press CTRL+X then Y and RETURN to save. Next, we’ll set up the APIs.

    import io
    import tweepy
    from google.cloud import vision
    from google.cloud.vision import types
    from google.cloud import storage

Animal detection and tweeting

We will be using Google Label Detection, which returns a list of labels it associates with the image. First off, you will need to install the Google Cloud Vision libraries on your Raspberry Pi, so type pip install --upgrade google-cloud-vision into your Terminal window. Once that has finished, run pip install google-cloud-storage.
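To make the label detection step more concrete, here is a minimal sketch of how a saved picture could be sent to the Vision API and turned into a plain list of label names. It is not the article's original listing: the helper names label_names() and detect_labels() are hypothetical, and the fallback between vision.Image and types.Image is an assumption, since the name of the image type depends on which google-cloud-vision release is installed (the types import used in this article belongs to older releases).

```python
import io


def label_names(response):
    """Return the lower-cased label descriptions from a label detection response."""
    return [label.description.lower() for label in response.label_annotations]


def detect_labels(image_path):
    """Send one image file to the Vision API and return its label names."""
    # Imported here so label_names() can be tried without the library installed.
    from google.cloud import vision

    client = vision.ImageAnnotatorClient()

    with io.open(image_path, "rb") as image_file:
        content = image_file.read()

    try:
        # Assumption: newer releases expose the image type directly.
        image = vision.Image(content=content)
    except AttributeError:
        # Assumption: older releases use the types module, as in the article.
        from google.cloud.vision import types
        image = types.Image(content=content)

    response = client.label_detection(image=image)
    return label_names(response)
```

Calling detect_labels() with the path of a captured picture needs working credentials and a network connection, so it is worth trying it on a single saved image before wiring it into pi-timolo.py.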

Now you need authorisation, so go to the Cloud Vision API site to set up an account. Click on the Manage Resources link and create a new project (you may need to log in or create a Google account). Go to the API Dashboard, then search for and enable the Vision API. Then go to APIs & Services > Credentials, and click on Create Credentials > Service Account Key > New Service Account from the drop-down. Don’t worry about choosing a Role. Click Create and you’ll be prompted to download a JSON file. You need this as it contains your service account key, which allows you to make calls to the API locally. Rename and move the JSON file into your Pi-timolo folder and make a note of the file path. Next, go back to pi-timolo.py and add the line: os.environ["GOOGLE_APPLICATION_CREDENTIALS"] = "path_to_your_.json_credential_file" below import os to reference the credentials in your JSON file.
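If you would like a clearer error when the key file is missing or mistyped, the credentials line can be wrapped in a small check. This is only a sketch: use_vision_credentials() and the KEY_PATH value are hypothetical, so substitute the name and location of your own renamed JSON file.

```python
import os

# Hypothetical location: use the path of your own renamed key file.
KEY_PATH = "/home/pi/pi-timolo/vision-key.json"


def use_vision_credentials(json_path):
    """Point the Google client libraries at a service account key file."""
    if not os.path.isfile(json_path):
        raise IOError("No credentials file found at " + json_path)
    # The Google client libraries look up this environment variable
    # when a client is created.
    os.environ["GOOGLE_APPLICATION_CREDENTIALS"] = json_path
```

Call use_vision_credentials(KEY_PATH) near the top of pi-timolo.py, below import os, so that it runs before any Vision client is created.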

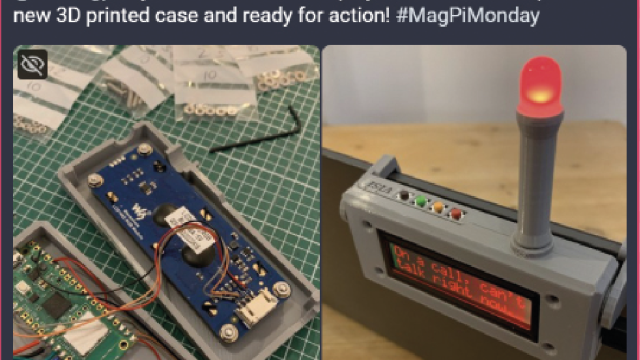

Next, set up a Twitter account if you haven’t already and install Tweepy by entering sudo pip install tweepy into a Terminal window. Once set up, visit apps.twitter.com and create a new app, then click on Keys and Access Tokens. Edit the code in userMotionCodeHere() with your own consumer and access info, labelled as ‘XXX’ in the code listing. Finally, place your camera in front of your bird feeder and run ./pi-timolo.py. Any pictures taken of a bird should now be tweeted! If you want to identify a different animal, change the word "bird" in the line if "bird" in tweetText: animalInPic = True.
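The check-then-tweet logic described above can be sketched roughly as follows. This is not the original code listing: the function names are hypothetical, the 'XXX' placeholders stand for your own keys, and the sketch assumes a Tweepy release that provides OAuthHandler, media_upload() and update_status(), plus a Twitter app that is allowed to post with them.

```python
def make_twitter_api(consumer_key, consumer_secret,
                     access_token, access_token_secret):
    """Build a Tweepy API object from the four values shown on the app page."""
    import tweepy  # imported here so the rest can be tried without Tweepy

    auth = tweepy.OAuthHandler(consumer_key, consumer_secret)
    auth.set_access_token(access_token, access_token_secret)
    return tweepy.API(auth)


def build_tweet_text(labels):
    """Join the Vision label descriptions into the text of the tweet."""
    return ", ".join(labels)


def tweet_if_animal(api, image_path, labels, animal="bird"):
    """Tweet the picture only if the chosen animal appears in its labels.

    Returns True if a tweet was sent, False otherwise.
    """
    tweetText = build_tweet_text(labels)
    animalInPic = animal.lower() in tweetText.lower()
    if not animalInPic:
        return False

    # Upload the picture first, then attach it to the status update.
    media = api.media_upload(image_path)
    api.update_status(status=tweetText, media_ids=[media.media_id])
    return True


# Example wiring (replace each 'XXX' with your own keys before running):
# api = make_twitter_api('XXX', 'XXX', 'XXX', 'XXX')
# tweet_if_animal(api, "/path/to/picture.jpg", ["bird", "beak"])
```

Because the test is a simple substring match, animal="bird" will also match labels such as "songbird" or "bird feeder". Passing animal="fox" or animal="squirrel" is one way to watch for a different visitor.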

Please note that although the API works well, it can’t always discern exactly what’s in the picture, especially if the animal is only partially in view. It also won’t distinguish between types of bird, but you should have more success with mammals. You can test the API out with some of your pictures at the Vision API site and visit twitter.com/pibirdbrain to see example tweets. Good luck and happy tweeting!