If you’re a fan of ’80s pop, you’ll have heard the Yamaha DX7. It’s one of the best-selling synthesizers, and its built-in sounds can transport you to the era of big hair and neon leggings. One reason it is so evocative is that many artists used those preset sounds, to the point that they became a cliché. The synth is based on frequency modulation (FM), and creating a good patch (or sound) from scratch could take a few days. Many chart-toppers took the easy way out.

This article was written by Sean McManus and appears in The MagPi #65.

“I’m nuts about FM synthesis,” says Richard James, who creates electronic music as Aphex Twin. “The first proper synth I got was a DX100 and I’ve always thought there’s got to be a more interesting way to program the damn things than laboriously going through all the hundreds of parameters. Even though I quite like doing that anyhow, hehe.”

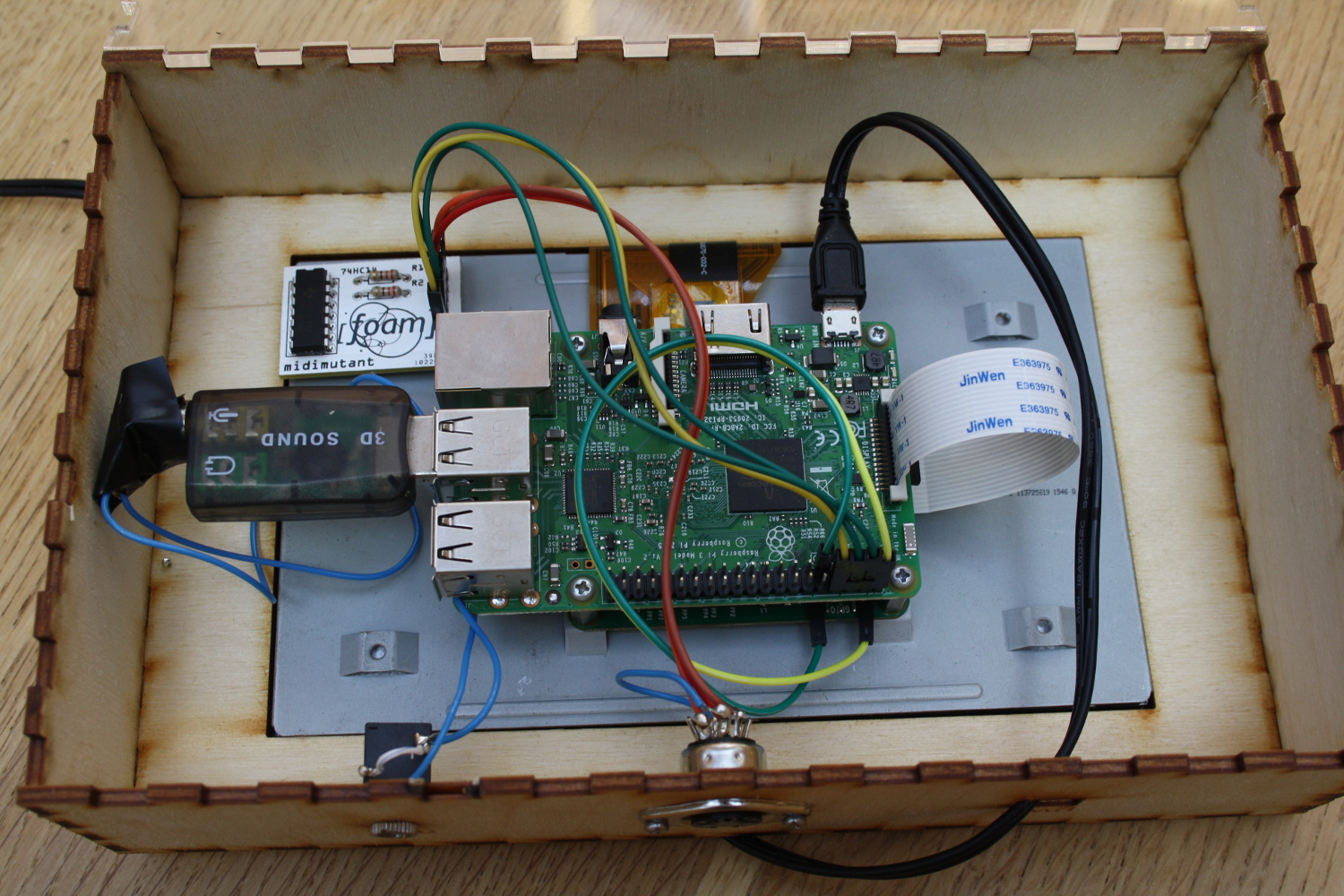

A conversation with his friend Dave Griffiths led to Dave building Midimutant, a Raspberry Pi-based device that programs the synth for you. “This is something you want to leave running for hours,” says Dave. “The Raspberry Pi is essential because you can make a stable setup that isn’t going to start updating itself and reboot.”

The idea came from a lost feature on the Kyma synth, based on the work of Andrew Horner, which enables sounds to evolve. Midimutant uses a similar approach: you give it a sound, and it aims to recreate it on the Yamaha TX7, a version of the DX7 without a keyboard. “There’s nothing especially great about a TX7 apart from I love all forms of FM and that is a really small unit, so it’s a lot easier to handle than a DX7 or other FM devices I’ve got lying about,” says Richard.

Dave adds: “The DX7 is the ‘classic’ FM synth. If it works on that, it would work on anything.”

Random scoring

At the start, a population of random sounds is created. Each one is compared to the source sound using Mel-frequency cepstral coefficients (MFCCs), a compact description of a sound’s spectrum that comes from work on speech recognition.
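
To make the comparison step concrete, here is a minimal sketch of how two sounds might be scored once their MFCCs are available. It assumes each sound has already been turned into a list of frames, each frame a list of coefficients, by an audio library of your choice. The function names and the mean-distance measure are illustrative assumptions, not Midimutant’s actual code.

```python
import math


def frame_distance(a, b):
    """Euclidean distance between two coefficient vectors of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def sound_distance(candidate, target):
    """Mean frame-by-frame distance between two sounds.

    Each sound is a list of frames; each frame is a list of MFCC values
    (assumed to be computed elsewhere). Lower means more similar.
    Only the frames both sounds have in common are compared.
    """
    n = min(len(candidate), len(target))
    if n == 0:
        raise ValueError("need at least one frame in each sound")
    total = sum(frame_distance(candidate[i], target[i]) for i in range(n))
    return total / n


# Made-up coefficient values, purely for illustration.
target = [[1.0, 0.0], [0.5, 0.5]]
close = [[1.0, 0.1], [0.5, 0.4]]
far = [[-1.0, 2.0], [3.0, -2.0]]

print(sound_distance(close, target) < sound_distance(far, target))
```

A distance like this gives every candidate a single number, which is all the ranking step needs.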

Each of the random sounds is scored and ranked for its similarity to the source. The program discards the lowest-scoring half, and creates a new population by copying with errors (mutation) and crossbreeding (mixing together) the top half. “Repeat this process tens or hundreds of times and it usually (although not always) converges on something good,” says Dave. The most interesting sounds emerge from trying to match beats and voices.
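
The loop described above can be sketched in a few lines of Python. This is a toy version under stated assumptions: a patch is just a list of integers, the score is a distance where lower is better, and the population size, mutation rate, and the `evolve` and `toy_score` names are all hypothetical. In the real device the score would come from listening to the synth, not from a hidden target list.

```python
import random


def evolve(score, genome_len, pop_size=20, generations=50,
           mutation_rate=0.05, max_value=99, rng=None):
    """Evolve a list of integers towards a low score (lower is better)."""
    rng = rng or random.Random()
    # Start with a population of completely random patches.
    population = [[rng.randint(0, max_value) for _ in range(genome_len)]
                  for _ in range(pop_size)]
    for _ in range(generations):
        # Rank by score and keep only the best half.
        population.sort(key=score)
        survivors = population[:pop_size // 2]
        children = []
        while len(survivors) + len(children) < pop_size:
            # Crossbreed: take each value from one of two surviving parents.
            mum, dad = rng.sample(survivors, 2)
            child = [rng.choice(pair) for pair in zip(mum, dad)]
            # Mutate: occasionally replace a value with a random one.
            child = [rng.randint(0, max_value)
                     if rng.random() < mutation_rate else gene
                     for gene in child]
            children.append(child)
        population = survivors + children
    return min(population, key=score)


# Toy stand-in for "how different does this sound from the source?"
hidden_target = [10, 20, 30, 40, 50, 60, 70, 80]


def toy_score(patch):
    return sum(abs(p - t) for p, t in zip(patch, hidden_target))


best = evolve(toy_score, genome_len=len(hidden_target),
              rng=random.Random(1))
print(toy_score(best))
```

Because the top half is carried over unchanged, the best score in this sketch can never get worse from one generation to the next, although nothing guarantees it reaches a perfect match.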

One fascinating aspect of the project is that Midimutant doesn’t need to understand how the synth functions. It just needs to know how to format the (initially random) data so the synth can use it, and it uses the sound the synth produces to score the results.
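
As an illustration of what “formatting the data” could involve, the sketch below wraps a list of parameter values in a MIDI system-exclusive message. It assumes the commonly documented DX7 single-voice dump layout (a six-byte Yamaha header, 155 data bytes, a checksum, and an end byte), which should be checked against the synth’s manual. The function names are hypothetical, and sending the bytes over MIDI is not shown.

```python
import random

VOICE_DATA_LEN = 155  # assumed size of a single-voice parameter block


def yamaha_checksum(data):
    """Seven-bit value that makes (sum of data + checksum) end in seven 0 bits."""
    return (-sum(data)) & 0x7F


def dx7_voice_sysex(params, channel=0):
    """Wrap 155 seven-bit parameter values in a single-voice dump message."""
    if len(params) != VOICE_DATA_LEN:
        raise ValueError("expected %d parameter bytes" % VOICE_DATA_LEN)
    if any(not 0 <= p <= 127 for p in params):
        raise ValueError("MIDI data bytes must be in the range 0-127")
    # Assumed header: start byte, Yamaha ID, channel, format, byte count.
    header = [0xF0, 0x43, channel & 0x0F, 0x00, 0x01, 0x1B]
    return bytes(header + list(params) + [yamaha_checksum(params), 0xF7])


# A random patch: the program needs no idea what any of the values mean.
rng = random.Random(0)
random_patch = [rng.randint(0, 99) for _ in range(VOICE_DATA_LEN)]
message = dx7_voice_sysex(random_patch)
print(len(message))
```

Individual parameters on a real synth have their own valid ranges, so a fuller version would probably limit each random value accordingly.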

The biggest challenge? “Listening to endless bonkers sounds for days on end, changing parameters slightly and waiting ages to see if there was an improvement,” says Dave. “Actually, that was quite fun…”